AI-Powered Robotics for Culvert and Sewer Inspections

Published Mar. 26, 2025

Project Overview

The inspection of sewer and culvert infrastructure has long been a time-consuming and labor-intensive process, often requiring manual assessment in challenging and hazardous environments. However, advancements in deep learning and robotics are transforming the way these critical systems are monitored and maintained.

By deploying state-of-the-art deep learning algorithms in robotic systems, we can automate defect detection in real time, enhancing accuracy, efficiency, and safety. These intelligent systems not only streamline inspections but also enable proactive maintenance, reducing costs and preventing failures before they become critical.

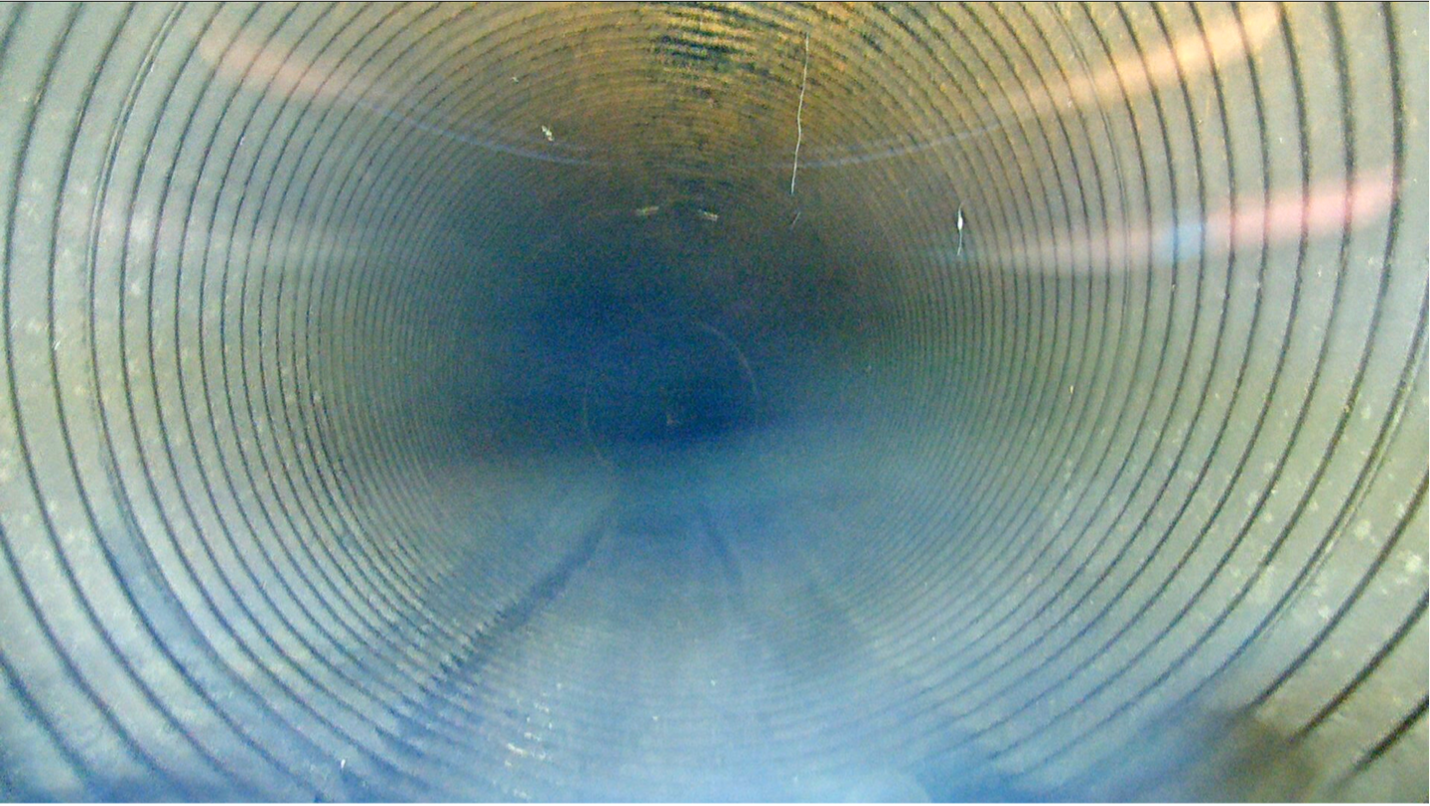

- Robotic system for automated inspections: With advances in robotics and artificial intelligence, automated inspection systems are transforming the way we monitor and maintain essential infrastructure. Clearpath Jackal is a lightweight, fast, and easy-to-use unmanned ground vehicle (UGV) that runs on ROS Noetic. Equipped with a range of sensors, including LiDAR, cameras, and GPS, Jackal enables real-time data collection and precise environment mapping. By navigating complex terrain autonomously and detecting infrastructure deficiencies in sewers and culverts, this robotic system improves the efficiency, accuracy, and safety of inspections.

- Deployment of AI models on robotic platforms: By running deep learning models, robotic systems can detect and analyze structural deficiencies in real time, reducing the need for manual inspections. For deployment, the deep learning model is first trained and saved locally. A Robot Operating System (ROS) package then loads the pre-trained model onto a GPU for inference, enabling real-time processing. The model's output is post-processed and displayed on-screen at 20 frames per second (FPS). This integration of AI models with robotic platforms enables real-time defect detection, data pre-processing, and visualization, making infrastructure inspection faster, safer, and more intelligent.
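The per-frame loop described above (run the pre-trained model, post-process its output, track throughput) can be sketched in plain Python. This is a minimal, framework-agnostic sketch, not the project's ROS package: `DefectDetector`, `FpsMeter`, and the `run_model` callable are hypothetical names, the callable stands in for the GPU-loaded network, and in a ROS Noetic node `process_frame` would be called from an image-topic subscriber callback. The sliding-window meter shows one way a figure such as 20 FPS can be measured.

```python
import time
from collections import deque
from typing import Callable, Deque, List, Optional, Sequence, Tuple

# A detection is (label, confidence, (x_min, y_min, x_max, y_max)).
Detection = Tuple[str, float, Tuple[int, int, int, int]]


class FpsMeter:
    """Estimates frames per second over a sliding window of frame timestamps."""

    def __init__(self, window: int = 30) -> None:
        self._stamps: Deque[float] = deque(maxlen=window)

    def tick(self, now: Optional[float] = None) -> float:
        # Record when a frame finished processing and return the current FPS estimate.
        self._stamps.append(time.perf_counter() if now is None else now)
        if len(self._stamps) < 2:
            return 0.0
        elapsed = self._stamps[-1] - self._stamps[0]
        return (len(self._stamps) - 1) / elapsed if elapsed > 0 else 0.0


class DefectDetector:
    """Hypothetical wrapper: runs a pre-trained model on each frame and filters its output."""

    def __init__(self, run_model: Callable[[object], Sequence[Detection]],
                 min_confidence: float = 0.5) -> None:
        self.run_model = run_model  # stands in for the GPU-loaded network (assumption)
        self.min_confidence = min_confidence
        self.fps = FpsMeter()

    def process_frame(self, frame: object,
                      now: Optional[float] = None) -> Tuple[List[Detection], float]:
        # Inference step, then post-processing: keep only confident detections.
        raw = self.run_model(frame)
        kept = [d for d in raw if d[1] >= self.min_confidence]
        return kept, self.fps.tick(now)
```

For example, calling `process_frame` with timestamps 50 ms apart drives the estimate to 20.0 FPS. Passing `now` explicitly keeps the meter testable; on the robot it can be left as `None` so the wall clock is used.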

- Control center for robotic systems: As robotic inspection systems grow more complex, a centralized Control Center becomes necessary for efficient monitoring and management. The Control Center is used to operate the Clearpath Jackal robotic system, allowing users to track robot status, deploy AI models, and oversee inspections. Operators can monitor critical modules such as motors, bridges, and battery levels, along with other essential systems, in real time. The system supports the deployment of AI-powered inspection models such as PipeWatch AI for detecting structural deficiencies with high accuracy. A live data visualization interface shows real-time footage from the robot’s camera alongside AI-generated inspection outputs. Users can initiate, manage, and control the entire inspection process remotely. The Control Center also generates the detailed inspection reports needed for maintenance planning and infrastructure assessment. By integrating real-time monitoring, AI-powered inspections, and automated reporting, the Control Center improves the efficiency, accuracy, and reliability of robotic inspections.
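To make the Control Center's monitoring and reporting role more concrete, here is a minimal sketch that assumes robot status arrives as simple numeric readings. The module names, the `bridge_connected` flag, the thresholds (`min_battery_pct`, `max_motor_temp_c`), and the report fields are all hypothetical and not the actual Control Center's schema; the point is the pattern of checking each module against limits and serializing the results together with the AI detections.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class RobotStatus:
    battery_pct: float
    motor_temps_c: Dict[str, float]  # e.g. {"front_left": 41.0, ...}
    bridge_connected: bool           # hypothetical flag for a communication bridge


@dataclass
class InspectionReport:
    asset_id: str
    warnings: List[str] = field(default_factory=list)
    defects: List[Dict[str, object]] = field(default_factory=list)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


def check_status(status: RobotStatus, min_battery_pct: float = 20.0,
                 max_motor_temp_c: float = 70.0) -> List[str]:
    """Return human-readable warnings for any module outside its (assumed) limits."""
    warnings = []
    if status.battery_pct < min_battery_pct:
        warnings.append(f"battery low: {status.battery_pct:.0f}%")
    for motor, temp in sorted(status.motor_temps_c.items()):
        if temp > max_motor_temp_c:
            warnings.append(f"motor {motor} hot: {temp:.1f} C")
    if not status.bridge_connected:
        warnings.append("bridge disconnected")
    return warnings


def build_report(asset_id: str, status: RobotStatus,
                 detections: List[Dict[str, object]]) -> InspectionReport:
    # Combine robot-health warnings and AI detections into one report object.
    return InspectionReport(asset_id=asset_id,
                            warnings=check_status(status),
                            defects=list(detections))
```

Keeping the report as plain JSON makes it easy to archive per inspection run or convert into whatever format the maintenance team uses.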

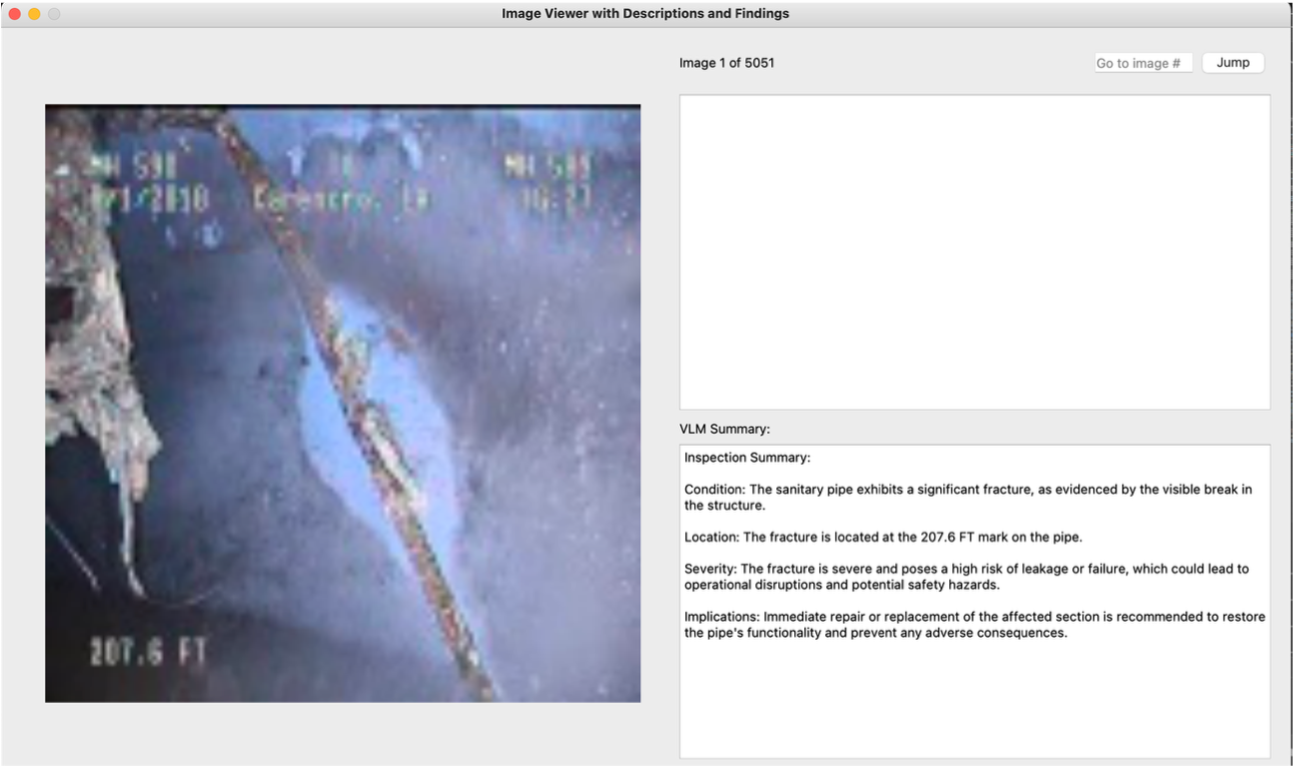

Integration of Vision-Language Models for Context-Aware Inspection

While current deep learning models excel at detecting and classifying structural defects, they often operate as isolated perception modules, requiring human interpretation of the results. To address this limitation, our ongoing research explores the deployment of vision-language models (VLMs) on robotic platforms for sewer and culvert inspections. VLMs are capable of jointly processing visual data from inspection cameras and textual inputs from structured reports, enabling the generation of detailed, human-readable summaries directly from inspection footage.

In this approach, a pre-trained multimodal model is fine-tuned using domain-specific datasets containing both deficiency images and corresponding inspection notes. When integrated into the robotic workflow, the VLM can:

- Interpret detected deficiencies in context.

- Generate summarized descriptions of detected defects for easy interpretation by the inspector.

- Answer operator queries in natural language based on live visual input (e.g., “Which sections of the culvert show signs of cracking?”).

This integration significantly enhances interpretability and allows inspection teams to make informed maintenance decisions faster.
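One way to connect the detector to a VLM is to serialize its detections into the text prompt that accompanies the camera frame, so the model can describe deficiencies in context or answer an operator's question. The sketch below only builds that prompt; the wording, the `DetectedDefect` fields (including the assumed `distance_m` position along the pipe), and the `build_vlm_prompt` helper are hypothetical, and the call to the fine-tuned model itself is omitted because it depends on which VLM is used.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DetectedDefect:
    label: str         # e.g. "crack", "deformation"
    confidence: float  # detector score in [0, 1]
    distance_m: float  # assumed position along the pipe or culvert, in metres


def build_vlm_prompt(defects: List[DetectedDefect], question: Optional[str] = None,
                     min_confidence: float = 0.5) -> str:
    """Hypothetical helper: turn detector output plus an optional operator question into VLM text input."""
    lines = ["You are assisting a sewer and culvert inspection.",
             "Detected deficiencies in the attached frame:"]
    kept = [d for d in defects if d.confidence >= min_confidence]
    if not kept:
        lines.append("- none above the confidence threshold")
    # List defects in order along the pipe so the summary follows the inspection path.
    for d in sorted(kept, key=lambda d: d.distance_m):
        lines.append(f"- {d.label} at {d.distance_m:.1f} m (confidence {d.confidence:.2f})")
    if question:
        lines.append(f"Operator question: {question}")
    else:
        lines.append("Summarize the condition of this section for the inspector.")
    return "\n".join(lines)
```

Grounding the prompt in the detector's own output gives the VLM explicit anchors (defect type, position, confidence) instead of asking it to find everything from pixels alone.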

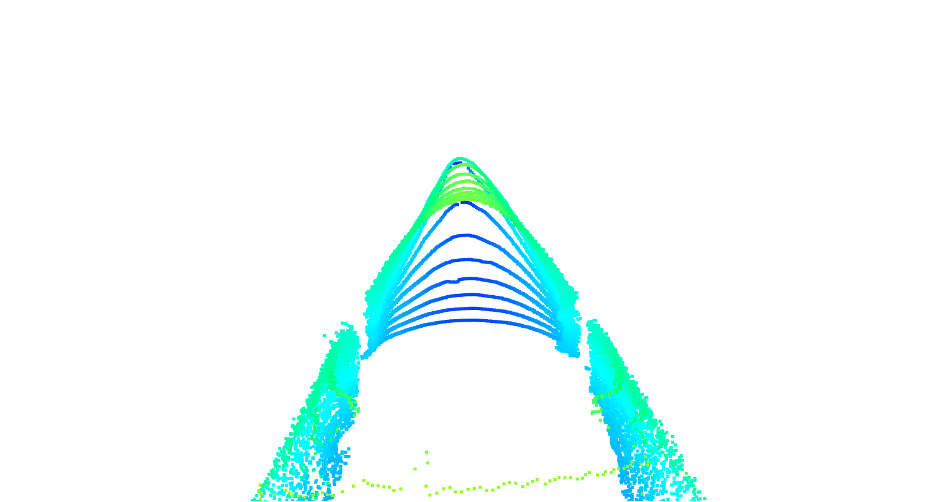

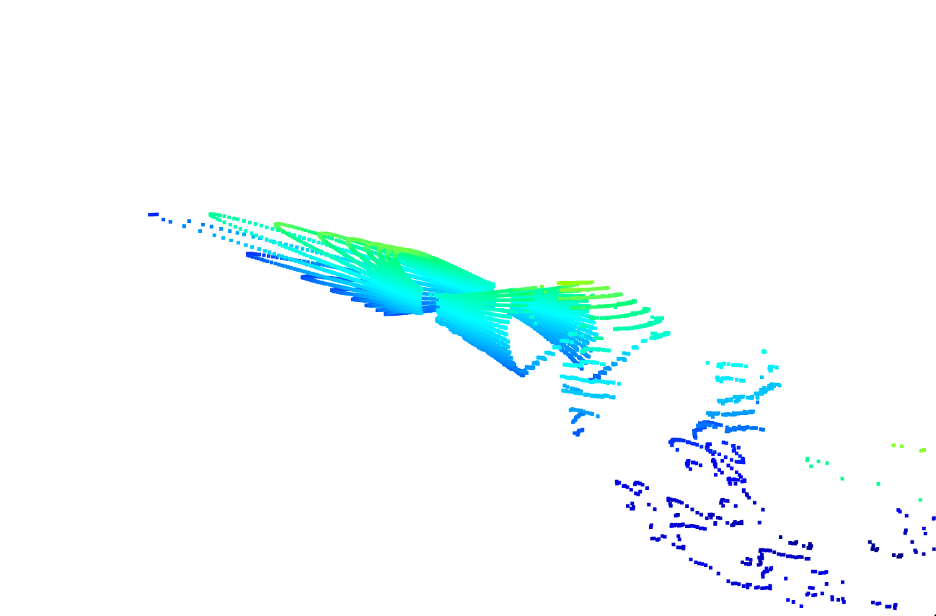

3D LiDAR + 2D RGB Data Fusion for Enhanced Defect Detection

While traditional inspections often rely on either camera images or LiDAR point clouds in isolation, this research explores multi-modal fusion of 3D LiDAR data with 2D RGB imagery.

By synchronizing LiDAR scans with RGB camera frames, a fused spatial-color representation of the environment is created. This allows the AI model to leverage depth information for precise defect localization and RGB texture data for material and surface condition analysis.
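Synchronization amounts to pairing each LiDAR scan with the camera frame closest in time. In a ROS Noetic stack this is commonly handled by `message_filters.ApproximateTimeSynchronizer`; the stdlib sketch below shows the underlying idea with plain timestamp lists. The function name and the 50 ms `max_skew_s` tolerance are assumptions, not values reported for this project.

```python
from bisect import bisect_left
from typing import List, Tuple


def pair_scans_with_frames(scan_stamps: List[float], frame_stamps: List[float],
                           max_skew_s: float = 0.05) -> List[Tuple[int, int]]:
    """Match each LiDAR scan to the nearest camera frame by timestamp.

    Both stamp lists must be sorted ascending (seconds). Returns (scan_idx, frame_idx)
    pairs; scans with no frame within max_skew_s are dropped.
    """
    pairs = []
    for i, t in enumerate(scan_stamps):
        j = bisect_left(frame_stamps, t)
        # Candidates: the frame just before t and the frame at or just after t.
        candidates = [k for k in (j - 1, j) if 0 <= k < len(frame_stamps)]
        if not candidates:
            continue
        best = min(candidates, key=lambda k: abs(frame_stamps[k] - t))
        if abs(frame_stamps[best] - t) <= max_skew_s:
            pairs.append((i, best))
    return pairs
```

Because matching is nearest-neighbor within a tolerance, one camera frame can be paired with more than one scan when the sensors run at different rates; strict one-to-one matching would need extra bookkeeping.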

The 3D LiDAR provides accurate geometric mapping of sewer and culvert interiors, capturing deformation, cracks, or obstructions in three-dimensional space. The RGB imagery adds high-resolution texture details, enabling identification of discoloration, corrosion, or biological growth that may not be geometrically distinct.
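A common way to build a combined geometry-and-texture representation is to project each LiDAR point into the camera image with a pinhole model: transform the point into the camera frame with an extrinsic rotation `R` and translation `t`, compute the pixel `u = fx * x / z + cx`, `v = fy * y / z + cy`, and take that pixel's color. The sketch below assumes calibrated extrinsics and intrinsics, an undistorted image stored as nested lists of RGB tuples, and a camera frame whose `z` axis points forward; `colorize_points` is a hypothetical name, not the project's code.

```python
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]
RGB = Tuple[int, int, int]


def colorize_points(points: Sequence[Point3],
                    R: Sequence[Sequence[float]], t: Point3,
                    fx: float, fy: float, cx: float, cy: float,
                    image: List[List[RGB]]) -> List[Tuple[Point3, RGB]]:
    """Attach an RGB color to every LiDAR point that projects inside the image.

    R (3x3, row-major) and t map LiDAR coordinates to camera coordinates.
    Points behind the camera or outside the image are skipped.
    """
    height, width = len(image), len(image[0])
    fused = []
    for p in points:
        # Extrinsic transform: p_cam = R @ p + t
        x, y, z = (sum(R[r][c] * p[c] for c in range(3)) + t[r] for r in range(3))
        if z <= 0:
            continue  # behind the camera, cannot be seen
        # Pinhole intrinsics: perspective divide, then focal length and principal point.
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            fused.append((p, image[v][u]))  # keep the original LiDAR coordinates
    return fused
```

Lens distortion correction and occlusion handling (several points landing on the same pixel) are omitted for brevity; a production pipeline would need both.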

The fusion process improves detection robustness under poor lighting or occluded conditions, where RGB alone may struggle, and enhances classification accuracy in complex, cluttered environments.
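One simple way to obtain that low-light robustness is late fusion with an illumination-dependent weight: the RGB and LiDAR branches each output a defect score, and the RGB score is trusted less as the frame gets darker. This is an illustrative scheme, not the fusion method used in this research; the linear brightness ramp, the `dark`/`bright` thresholds, and the `max_rgb_share` cap are all assumptions.

```python
from typing import Sequence


def mean_brightness(gray_pixels: Sequence[int]) -> float:
    """Mean intensity of an 8-bit grayscale frame, in [0, 255]."""
    return sum(gray_pixels) / len(gray_pixels) if gray_pixels else 0.0


def rgb_weight(brightness: float, dark: float = 30.0, bright: float = 100.0) -> float:
    """Assumed RGB trust: 0 at or below `dark`, 1 at or above `bright`, linear in between."""
    if brightness <= dark:
        return 0.0
    if brightness >= bright:
        return 1.0
    return (brightness - dark) / (bright - dark)


def fused_score(rgb_score: float, lidar_score: float, gray_pixels: Sequence[int],
                max_rgb_share: float = 0.5) -> float:
    """Blend per-region defect scores; LiDAR carries the decision when the image is dark."""
    w = max_rgb_share * rgb_weight(mean_brightness(gray_pixels))
    return w * rgb_score + (1.0 - w) * lidar_score
```

In a dark frame the result falls back to the LiDAR score alone, while in a well-lit frame both modalities contribute equally under the default cap.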